k8s-goharbor

Harbor registry

Template version: v25-10-30

Helm charts used: harbor/harbor v1.18.0

This template contains the configuration files needed to run Harbor in a Kubernetes cluster.

Harbor is an open source registry that secures artifacts with policies and role-based access control, ensures images are scanned and free from vulnerabilities, and signs images as trusted. Harbor, a CNCF Graduated project, delivers compliance, performance, and interoperability to help you consistently and securely manage artifacts across cloud native compute platforms like Kubernetes and Docker.

Template override parameters

File _values-tpl.yaml contains template configuration parameters and their default values:

```yaml
#
# _values-tpl.yaml
#
# cskygen template default values file
#
_tplname: k8s-goharbor
_tpldescription: GoHarbor opensource registry
_tplversion: 25-10-30
#
# Values to override
#
## k8s cluster credentials kubeconfig file
kubeconfig: config-k8s-mod
namespace:
  ## k8s namespace name
  name: goharbor
  ## Service domain name
  domain: cskylab.net
publishing:
  ## External url
  url: goharbor.mod.cskylab.net
  ## Password for administrative user
  password: 'NoFear21'
certificate:
  ## Cert-manager clusterissuer
  clusterissuer: ca-test-internal
## Local storage PV's node affinity (Configured in pv*.yaml)
localpvnodes:    # (k8s node names)
  all_pv: k8s-mod-n1
  # k8s nodes domain name
  domain: cskylab.net
  # k8s nodes local administrator
  localadminusername: kos
localrsyncnodes:    # (k8s node names)
  all_pv: k8s-mod-n2
  # k8s nodes domain name
  domain: cskylab.net
  # k8s nodes local administrator
  localadminusername: kos
```

TL;DR

Prepare the LVM data services for the PVs as described in the LVM Data Services section.

Install namespace and charts:

```bash
# Pull charts to './charts/' directory
./csdeploy.sh -m pull-charts

# Install
./csdeploy.sh -m install

# Check status
./csdeploy.sh -l
```

Run:

- Published at: {{ .publishing.url }}
- Username: admin
- Password: {{ .publishing.password }}

Prerequisites

- Administrative access to Kubernetes cluster.

- Helm v3.

LVM Data Services

Data services are supported by the following nodes:

| Data service | Kubernetes PV node | Kubernetes RSync node |
|---|---|---|
| /srv/{{ .namespace.name }} | {{ .localpvnodes.all_pv }} | {{ .localrsyncnodes.all_pv }} |

PV node is the node that supports the data service in normal operation.

RSync node is the node that receives data service copies synchronized by cron-jobs for HA.

To create the corresponding LVM data services, run the following commands from your mcc management machine:

```bash
#
# Create LVM data services in PV node
#
echo \
&& echo "******** START of snippet execution ********" \
&& echo \
&& ssh {{ .localpvnodes.localadminusername }}@{{ .localpvnodes.all_pv }}.{{ .localpvnodes.domain }} \
'sudo cs-lvmserv.sh -m create -qd "/srv/{{ .namespace.name }}" \
&& mkdir "/srv/{{ .namespace.name }}/data/jobservice" \
&& mkdir "/srv/{{ .namespace.name }}/data/postgresql" \
&& mkdir "/srv/{{ .namespace.name }}/data/redis" \
&& mkdir "/srv/{{ .namespace.name }}/data/registry" \
&& mkdir "/srv/{{ .namespace.name }}/data/trivy"' \
&& echo \
&& echo "******** END of snippet execution ********" \
&& echo
```

```bash
#
# Create LVM data services in RSync node
#
echo \
&& echo "******** START of snippet execution ********" \
&& echo \
&& ssh {{ .localrsyncnodes.localadminusername }}@{{ .localrsyncnodes.all_pv }}.{{ .localrsyncnodes.domain }} \
'sudo cs-lvmserv.sh -m create -qd "/srv/{{ .namespace.name }}" \
&& mkdir "/srv/{{ .namespace.name }}/data/jobservice" \
&& mkdir "/srv/{{ .namespace.name }}/data/postgresql" \
&& mkdir "/srv/{{ .namespace.name }}/data/redis" \
&& mkdir "/srv/{{ .namespace.name }}/data/registry" \
&& mkdir "/srv/{{ .namespace.name }}/data/trivy"' \
&& echo \
&& echo "******** END of snippet execution ********" \
&& echo
```
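The two create snippets differ only in the target node, so they can also be expressed as a single loop. The following is a minimal bash sketch, assuming the template placeholders have already been rendered to real values; lvm_create_remote_cmd and create_on_nodes are hypothetical helpers, not part of csdeploy.sh or cs-lvmserv.sh.

```bash
#!/usr/bin/env bash
# Sketch only: helper names are hypothetical, not part of csdeploy.sh.

# Subdirectories created by the snippets above (one per Harbor component)
HARBOR_SUBDIRS=(jobservice postgresql redis registry trivy)

# Print the remote command that creates /srv/<ns> and its subdirectories
lvm_create_remote_cmd() {
  local ns="$1" cmd d
  cmd="sudo cs-lvmserv.sh -m create -qd \"/srv/${ns}\""
  for d in "${HARBOR_SUBDIRS[@]}"; do
    cmd+=" && mkdir \"/srv/${ns}/data/${d}\""
  done
  printf '%s\n' "$cmd"
}

# Run that command over ssh on each node given as user@host
create_on_nodes() {
  local ns="$1" host
  shift
  for host in "$@"; do
    echo "******** Creating /srv/${ns} on ${host} ********"
    ssh "$host" "$(lvm_create_remote_cmd "$ns")" || return 1
  done
}
```

For example, with the default template values: create_on_nodes goharbor kos@k8s-mod-n1.cskylab.net kos@k8s-mod-n2.cskylab.net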

To delete the corresponding LVM data services, run the following commands from your mcc management machine:

```bash
#
# Delete LVM data services in PV node
#
echo \
&& echo "******** START of snippet execution ********" \
&& echo \
&& ssh {{ .localpvnodes.localadminusername }}@{{ .localpvnodes.all_pv }}.{{ .localpvnodes.domain }} \
'sudo cs-lvmserv.sh -m delete -qd "/srv/{{ .namespace.name }}"' \
&& echo \
&& echo "******** END of snippet execution ********" \
&& echo
```

```bash
#
# Delete LVM data services in RSync node
#
echo \
&& echo "******** START of snippet execution ********" \
&& echo \
&& ssh {{ .localrsyncnodes.localadminusername }}@{{ .localrsyncnodes.all_pv }}.{{ .localrsyncnodes.domain }} \
'sudo cs-lvmserv.sh -m delete -qd "/srv/{{ .namespace.name }}"' \
&& echo \
&& echo "******** END of snippet execution ********" \
&& echo
```

Persistent Volumes

Review the values in all PersistentVolume manifests matching the name format ./pv-*.yaml.

The following PersistentVolume & StorageClass manifests are applied:

```
# PV manifests
pv-jobservice.yaml
pv-postgresql.yaml
pv-redis.yaml
pv-registry.yaml
pv-trivy.yaml
```

The node assigned in the nodeAffinity section of each PV manifest is used when scheduling the pod that holds the service.

How-to guides

Pull Charts

To pull charts, set the required repositories and charts in the variable source_charts inside the script csdeploy.sh and run:

```bash
# Pull charts to './charts/' directory
./csdeploy.sh -m pull-charts
```

When pulling new charts, all the content of the ./charts directory is removed and replaced by the newly pulled charts.

After pulling new charts, redeploy the new versions with: ./csdeploy.sh -m update.
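Because pull-charts replaces the whole ./charts directory, it may be worth keeping a copy of the current charts so a previous version can be restored if the new one misbehaves. This is a minimal sketch; the helper name backup_charts_dir and the <dir>.bak-<timestamp> naming scheme are assumptions, not something csdeploy.sh provides.

```bash
#!/usr/bin/env bash
# Sketch only: backup_charts_dir is a hypothetical helper.

# Copy the charts directory to <dir>.bak-<timestamp> and print the copy's path
backup_charts_dir() {
  local src="${1:-./charts}" dest
  if [ ! -d "$src" ]; then
    echo "No ${src} directory to back up" >&2
    return 1
  fi
  # e.g. ./charts -> ./charts.bak-20251030-142500
  dest="${src%/}.bak-$(date +%Y%m%d-%H%M%S)"
  cp -a "$src" "$dest" || return 1
  printf '%s\n' "$dest"
}
```

Usage: backup_charts_dir ./charts && ./csdeploy.sh -m pull-charts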

Install

To create the namespace and persistent volumes and install the chart:

```bash
# Create namespace, PV and install chart
./csdeploy.sh -m install
```

Notice that PV's are not namespaced. They are deployed at cluster scope.

Update

To update chart settings, change values in the file values-harbor.yaml.

Redeploy or upgrade the chart by running:

```bash
# Redeploy or upgrade chart
./csdeploy.sh -m update
```

Uninstall

To uninstall the chart and remove the namespace and PV, run:

```bash
# Uninstall chart, remove PV and namespace
./csdeploy.sh -m uninstall
```

Remove

This option is intended only for removing the namespace when the chart deployment has failed. Otherwise, you must run ./csdeploy.sh -m uninstall.

To remove the PV, the namespace and all its contents, run:

```bash
# Remove PV namespace and all its contents
./csdeploy.sh -m remove
```

Display status

To display namespace, persistence and chart status, run:

```bash
# Display namespace, persistence and chart status:
./csdeploy.sh -l
```

Backup & data protection

Backup & data protection must be configured in the file cs-cron-scripts on the node that supports the data services.

RSync HA copies

RSync cron jobs are used to achieve service HA for the LVM data services that support the persistent volumes. The script cs-rsync.sh performs the following actions:

- Take a snapshot of LVM data service in the node that supports the service (PV node)

- Copy and synchronize the data to the mirrored data service in the Kubernetes node designated for HA (RSync node)

- Remove snapshot in LVM data service

To perform manual RSync copies on demand, run the following commands from your mcc management machine:

Warning: You should not run two copies at the same time. Check the scheduled jobs in cs-cron-scripts and disable them if necessary to avoid conflicts.

```bash
#
# RSync data services
#
echo \
&& echo "******** START of snippet execution ********" \
&& echo \
&& ssh {{ .localpvnodes.localadminusername }}@{{ .localpvnodes.all_pv }}.{{ .localpvnodes.domain }} \
'sudo cs-rsync.sh -q -m rsync-to -d /srv/{{ .namespace.name }} \
-t {{ .localrsyncnodes.all_pv }}.{{ .namespace.domain }}' \
&& echo \
&& echo "******** END of snippet execution ********" \
&& echo
```
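Before running the manual copy, you can list which RSync entries are still active (not commented out) in the cron file on the PV node. This is a minimal sketch: active_cron_jobs is a hypothetical helper, and the full path of cs-cron-scripts on the node is not given in this guide, so you must pass it yourself (the user field in the cron lines suggests an /etc/cron.d style file, but that is an assumption).

```bash
#!/usr/bin/env bash
# Sketch only: active_cron_jobs is a hypothetical helper.

# Print active (uncommented) cron lines that call the given script.
# Returns non-zero when no active entry is found.
active_cron_jobs() {
  local cron_file="$1" script="$2"
  # Drop commented lines, then keep the ones mentioning the script
  grep -v '^[[:space:]]*#' "$cron_file" | grep -F -- "$script"
}
```

Run it on the PV node, for example active_cron_jobs /path/to/cs-cron-scripts cs-rsync.sh, and comment out any line it prints before launching the copy. The same helper works with cs-restic.sh before an on-demand restic backup.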

RSync cronjobs:

The following cron jobs should be added to file cs-cron-scripts on the node that supports the service (PV node). Change time schedule as needed:

```bash
################################################################################
# /srv/{{ .namespace.name }} - RSync LVM data services
################################################################################
##
## RSync path: /srv/{{ .namespace.name }}
## To Node: {{ .localrsyncnodes.all_pv }}
## At minute 0 past every hour from 8 through 23.
# 0 8-23 * * * root run-one cs-lvmserv.sh -q -m snap-remove -d /srv/{{ .namespace.name }} >> /var/log/cs-rsync.log 2>&1 ; run-one cs-rsync.sh -q -m rsync-to -d /srv/{{ .namespace.name }} -t {{ .localrsyncnodes.all_pv }}.{{ .namespace.domain }} >> /var/log/cs-rsync.log 2>&1
```
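The cron entry above is commented out; it only runs once the leading # is removed. Its schedule field 0 8-23 * * * means minute 0 of every hour from 08:00 through 23:00, i.e. 16 runs per day. The sketch below renders the same entry with concrete values, which can help when editing cs-cron-scripts by hand; rsync_cron_entry is a hypothetical helper that only prints text.

```bash
#!/usr/bin/env bash
# Sketch only: rsync_cron_entry is a hypothetical helper that prints
# the RSync cron line with the template placeholders filled in.

rsync_cron_entry() {
  local schedule="$1" ns="$2" target="$3"
  local log="/var/log/cs-rsync.log"
  printf '%s root run-one cs-lvmserv.sh -q -m snap-remove -d /srv/%s >> %s 2>&1 ; run-one cs-rsync.sh -q -m rsync-to -d /srv/%s -t %s >> %s 2>&1\n' \
    "$schedule" "$ns" "$log" "$ns" "$target" "$log"
}
```

For example, rsync_cron_entry '0 8-23 * * *' goharbor k8s-mod-n2.cskylab.net prints the enabled entry for the default template values.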

Restic backup

Restic can be configured to perform data backups to local USB disks, remote disks via SFTP, or S3 cloud storage.

To perform on-demand restic backups, run the following commands from your mcc management machine:

Warning: You should not launch two backups at the same time. Check the scheduled jobs in cs-cron-scripts and disable them if necessary to avoid conflicts.

```bash
#
# Restic backup data services
#
echo \
&& echo "******** START of snippet execution ********" \
&& echo \
&& ssh {{ .localpvnodes.localadminusername }}@{{ .localpvnodes.all_pv }}.{{ .localpvnodes.domain }} \
'sudo cs-restic.sh -q -m restic-bck -d /srv/{{ .namespace.name }} -t {{ .namespace.name }}' \
&& echo \
&& echo "******** END of snippet execution ********" \
&& echo
```

To view available backups:

```bash
echo \
&& echo "******** START of snippet execution ********" \
&& echo \
&& ssh {{ .localpvnodes.localadminusername }}@{{ .localpvnodes.all_pv }}.{{ .localpvnodes.domain }} \
'sudo cs-restic.sh -q -m restic-list -t {{ .namespace.name }}' \
&& echo \
&& echo "******** END of snippet execution ********" \
&& echo
```

Restic cronjobs:

The following cron jobs should be added to file cs-cron-scripts on the node that supports the service (PV node). Change time schedule as needed:

```bash
################################################################################
# /srv/{{ .namespace.name }} - Restic backups
################################################################################
##
## Data service: /srv/{{ .namespace.name }}
## At minute 30 past every hour from 8 through 23.
# 30 8-23 * * * root run-one cs-lvmserv.sh -q -m snap-remove -d /srv/{{ .namespace.name }} >> /var/log/cs-restic.log 2>&1 ; run-one cs-restic.sh -q -m restic-bck -d /srv/{{ .namespace.name }} -t {{ .namespace.name }} >> /var/log/cs-restic.log 2>&1 && run-one cs-restic.sh -q -m restic-forget -t {{ .namespace.name }} -f "--keep-hourly 6 --keep-daily 31 --keep-weekly 5 --keep-monthly 13 --keep-yearly 10" >> /var/log/cs-restic.log 2>&1
```
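The restic-forget step keeps snapshots according to the --keep-* policy. Since restic keeps a snapshot only once even when several rules select it, the policy in this entry retains at most 6 + 31 + 5 + 13 + 10 = 65 snapshots for each snapshot group restic applies it to. A small bash sketch of that arithmetic, with max_kept_snapshots as a hypothetical helper:

```bash
#!/usr/bin/env bash
# Sketch only: max_kept_snapshots is a hypothetical helper. It sums the
# values of the --keep-* flags, an upper bound on retained snapshots.

max_kept_snapshots() {
  local policy="$1" total=0 prev="" tok
  # Word splitting is intended: the policy is a list of flag/value pairs
  for tok in $policy; do
    case "$prev" in
      --keep-last|--keep-hourly|--keep-daily|--keep-weekly|--keep-monthly|--keep-yearly)
        total=$(( total + tok )) ;;
    esac
    prev="$tok"
  done
  echo "$total"
}
```

Usage: max_kept_snapshots "--keep-hourly 6 --keep-daily 31 --keep-weekly 5 --keep-monthly 13 --keep-yearly 10" prints 65.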

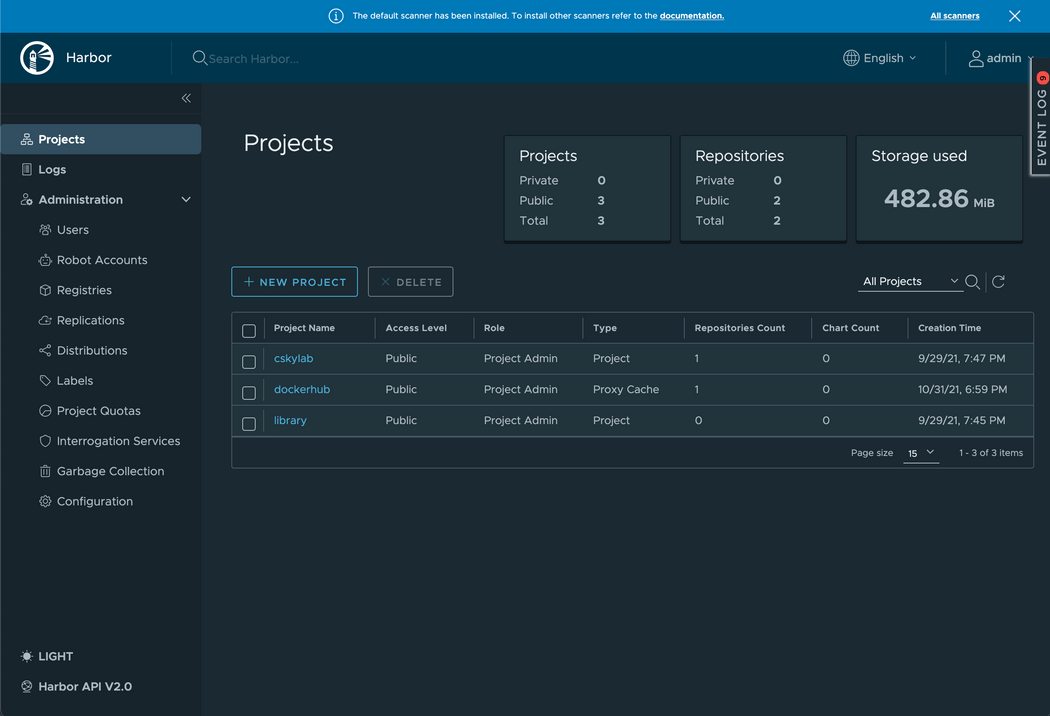

Create private registry

To create a private registry repository (e.g. cskylab), follow these steps:

Log in to Harbor with the admin account:

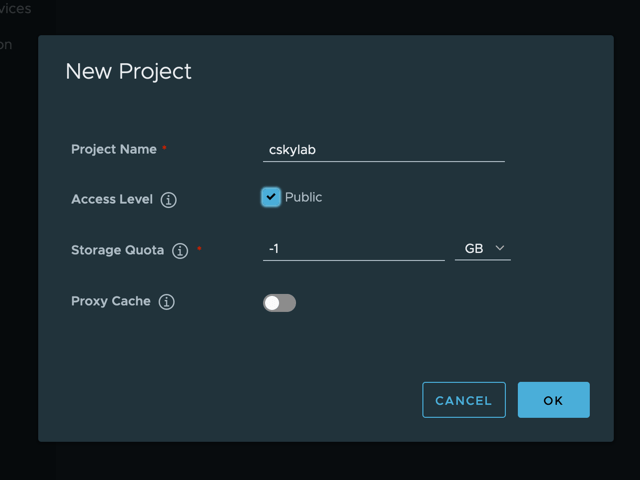

Go to Projects and select + NEW PROJECT

Enter your private registry information:

Your private registry repository is now accessible via '{{ .publishing.url }}/cskylab'
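Once the project exists, images are pushed to it with the standard docker login, tag and push commands. The sketch below assumes the docker CLI on your workstation; harbor_ref and push_to_harbor are hypothetical helpers, and cskylab is the example project used above. Because the Harbor certificate is issued by the internal cluster issuer (ca-test-internal), the Docker host may first need to trust that CA, for example by placing it at /etc/docker/certs.d/<registry>/ca.crt.

```bash
#!/usr/bin/env bash
# Sketch only: helper names are hypothetical.

# Build the full Harbor reference: <registry>/<project>/<image>
harbor_ref() {
  local registry="$1" project="$2" image="$3"
  printf '%s/%s/%s\n' "${registry%/}" "$project" "$image"
}

# Log in, tag a local image and push it to the Harbor project
push_to_harbor() {
  local registry="$1" project="$2" image="$3" ref
  ref="$(harbor_ref "$registry" "$project" "$image")"
  docker login "$registry" || return 1
  docker tag "$image" "$ref" || return 1
  docker push "$ref"
}
```

For example: push_to_harbor goharbor.mod.cskylab.net cskylab nginx:latest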

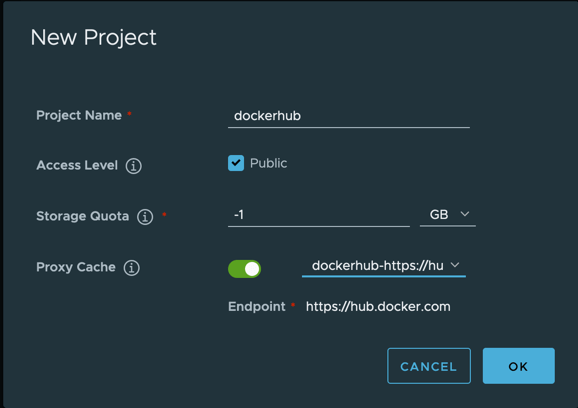

Create dockerhub proxy

To create the dockerhub registry proxy, follow these steps:

Log in to Harbor with the admin account:

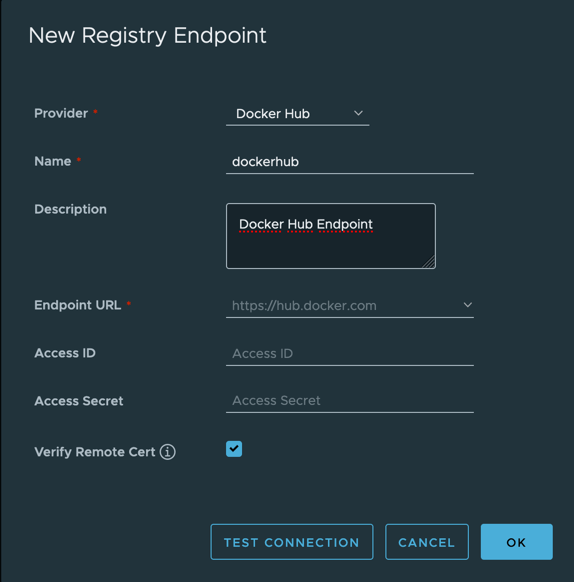

Before creating a proxy cache project, create a Harbor registry endpoint for the proxy cache project to use.

Go to Registries and select + NEW ENDPOINT

Test the connection and create the endpoint.

After the registry endpoint is created, you can reference it in the new dockerhub proxy cache repository.

To create the dockerhub proxy cache repository, go to Projects, select + NEW PROJECT and enter the following information:

Your dockerhub proxy repository is now accessible via '{{ .publishing.url }}/dockerhub'

To start using the proxy cache, configure your docker pull commands or pod manifests to reference the proxy cache project by adding {{ .publishing.url }}/dockerhub/ as a prefix to the image tag. For example:

```bash
docker pull {{ .publishing.url }}/dockerhub/goharbor/harbor-core:dev
```

To pull official images (single-level repositories such as nginx), make sure to include the library namespace. For example:

```bash
docker pull {{ .publishing.url }}/dockerhub/library/nginx:latest
```
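The prefixing rule above can be captured in a small function that rewrites a Docker Hub reference into its proxy cache form, adding library/ for single-level official images. This is a sketch; proxy_image is a hypothetical helper, and dockerhub is the project name created above.

```bash
#!/usr/bin/env bash
# Sketch only: proxy_image is a hypothetical helper.

# Rewrite a Docker Hub image reference to go through the Harbor proxy cache
proxy_image() {
  local registry="$1" image="$2"
  image="${image#docker.io/}"          # treat docker.io/x like x
  case "$image" in
    */*) ;;                            # already has a namespace
    *)   image="library/${image}" ;;   # official image: add library/
  esac
  printf '%s/dockerhub/%s\n' "${registry%/}" "$image"
}
```

For example: docker pull "$(proxy_image goharbor.mod.cskylab.net nginx:latest)" pulls goharbor.mod.cskylab.net/dockerhub/library/nginx:latest.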

Upgrade PostgreSQL database version

- Back up the running container

The pg_dumpall utility is used for writing out (dumping) all of your PostgreSQL databases of a cluster. It accomplishes this by calling the pg_dump command for each database in a cluster, while also dumping global objects that are common to all databases, such as database roles and tablespaces.

The official PostgreSQL Docker image comes bundled with all of the standard utilities, such as pg_dumpall, and it is what we will use in this tutorial to perform a complete backup of our database server.

If your Postgres server is running as a Kubernetes Pod, you will execute one of the following commands:

```bash
# Server dump with postgres user
kubectl -n {{ .namespace.name }} exec -i service/postgres -- /bin/bash -c "PGPASSWORD='{{ .publishing.password }}' pg_dumpall -U postgres" > postgresql.dump

# Database backup with harbor user
kubectl -n {{ .namespace.name }} exec -i service/postgres -- /bin/bash -c "PGPASSWORD='{{ .publishing.password }}' pg_dump -U harbor -d registry -F c" > harbor.backup
```
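Before uninstalling anything, it is worth checking that both dump files were actually written. The sketch below is a minimal check, not a full validation: it tests that postgresql.dump is non-empty and that harbor.backup starts with PGDMP, the signature of pg_dump custom-format archives (-F c). check_pg_backups is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Sketch only: check_pg_backups is a hypothetical helper.

check_pg_backups() {
  local sql_dump="${1:-postgresql.dump}" custom_dump="${2:-harbor.backup}"
  # The pg_dumpall output is plain SQL text: it must exist and be non-empty
  if [ ! -s "$sql_dump" ]; then
    echo "ERROR: ${sql_dump} is missing or empty" >&2
    return 1
  fi
  # Custom-format archives begin with the magic bytes "PGDMP"
  if [ "$(head -c 5 "$custom_dump" 2>/dev/null)" != "PGDMP" ]; then
    echo "ERROR: ${custom_dump} is not a pg_dump custom-format archive" >&2
    return 1
  fi
  echo "OK: ${sql_dump} and ${custom_dump} look valid"
}
```

If pg_restore is installed locally, pg_restore --list harbor.backup prints the archive's table of contents as a deeper check.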

- Deploy New Postgres Image in a limited namespace

The second step is to deploy a new Postgres container using the updated image version. This container MUST NOT mount the same volume as the older Postgres container. It needs to mount a new volume for the database.

Note: If you mount to a previous volume used by the older Postgres server, the new Postgres server will fail. Postgres requires the data to be migrated before it can load it.

To deploy the new version on an empty volume:

- Uninstall the namespace containing the PostgreSQL service ({{ .namespace.name }})
- Delete the PostgreSQL data service
- Re-create the PostgreSQL data service
- Edit the csdeploy.sh file, commenting out all helm pull lines that deploy charts, and run csdeploy.sh -m pull-charts
- Rename all mod-xxxx.yaml files except mod-postgresql.yaml
- Launch the namespace with csdeploy.sh -m install, deploying a new PostgreSQL server

- Import PostgreSQL dump into new pod

With the new Postgres container running with a new volume mount for the data directory, use psql to import the server dump file, or pg_restore to import the custom-format database backup. During the import process Postgres migrates the databases to the latest system schema.

```bash
# Server dump
kubectl -n {{ .namespace.name }} exec -i service/postgres -- /bin/bash -c "PGPASSWORD='{{ .publishing.password }}' psql -U postgres" < postgresql.dump

# Database backup
kubectl -n {{ .namespace.name }} exec -i service/postgres -- /bin/bash -c "PGPASSWORD='{{ .publishing.password }}' pg_restore -U postgres -d registry --no-owner --role=harbor" < harbor.backup
```

- Upgrade Your PostgreSQL Passwords to SCRAM

This step is needed when migrating from Bitnami PostgreSQL charts to manifests based on official images.

(More information at https://www.crunchydata.com/blog/how-to-upgrade-postgresql-passwords-to-scram)

- Open a terminal in the postgres-0 pod or service.
- Enter the psql console as user postgres:

```bash
psql -U postgres
```

- From the psql command-line interface, you can use the \password command, for example:

```
\password
```

Or, to set the password for another user:

```
\password username
```

- You will be prompted to enter a new password. This new password will be converted to a SCRAM verifier, and the upgrade for this user will be complete.
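To find out which login roles still need the \password step, you can query pg_authid, where SCRAM verifiers are stored with a SCRAM-SHA-256 prefix and legacy hashes with an md5 prefix. This is a sketch: list_non_scram_roles reuses the kubectl exec pattern from the backup step, password_kind is a hypothetical helper for classifying a single stored value, and pg_authid is only readable by a superuser such as postgres.

```bash
#!/usr/bin/env bash
# Sketch only: helper names are hypothetical.

# Classify a stored rolpassword value
password_kind() {
  case "$1" in
    SCRAM-SHA-256*) echo scram ;;
    md5*)           echo md5 ;;
    "")             echo none ;;
    *)              echo unknown ;;
  esac
}

# List login roles whose stored password is not a SCRAM verifier
list_non_scram_roles() {
  local ns="$1" pgpass="$2"
  kubectl -n "$ns" exec -i service/postgres -- /bin/bash -c \
    "PGPASSWORD='${pgpass}' psql -U postgres -At -c \"SELECT rolname FROM pg_authid WHERE rolcanlogin AND rolpassword NOT LIKE 'SCRAM-SHA-256%'\""
}
```

For example: list_non_scram_roles goharbor 'NoFear21' (default template values). An empty result suggests every login role with a password already uses SCRAM.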

- Deploy the namespace with all charts & manifests

Once the PostgreSQL container is running with the new version and the dumped data has been successfully restored, the namespace can be restarted with all its charts:

- Uninstall the namespace
- Edit the csdeploy.sh file, uncommenting all helm pull lines that deploy charts
- Re-import all charts by running csdeploy.sh -m pull-charts
- Review & rename all mod-xxxx.yaml files
- Deploy the namespace by running csdeploy.sh -m install

Utilities

Passwords and secrets

Generate passwords and secrets with:

```bash
# Screen
echo "$(head -c 512 /dev/urandom | LC_ALL=C tr -cd 'a-zA-Z0-9' | head -c 16)"

# File (without newline)
printf '%s' "$(head -c 512 /dev/urandom | LC_ALL=C tr -cd 'a-zA-Z0-9' | head -c 16)" > RESTIC-PASS.txt
```

Change the parameter head -c 16 according to the desired length of the secret.
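The one-liners above read 512 random bytes, of which only about 62/256 (roughly 24%) pass the tr filter, so secrets much longer than about 100 characters may come out shorter than requested. A minimal sketch of a length-aware variant, with gen_secret as a hypothetical helper name:

```bash
#!/usr/bin/env bash
# Sketch only: gen_secret is a hypothetical helper.

# Print a random alphanumeric secret of the requested length (default 16)
gen_secret() {
  local len="${1:-16}"
  # About 62 of every 256 random bytes are alphanumeric, so read
  # 32 bytes per requested character to leave a wide safety margin
  head -c $(( len * 32 )) /dev/urandom | LC_ALL=C tr -cd 'a-zA-Z0-9' | head -c "$len"
}
```

Usage: gen_secret 32 prints a 32-character secret; printf '%s' "$(gen_secret 16)" > RESTIC-PASS.txt writes one to a file without a newline.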

Reference

To learn more see:

Helm charts and values

| Chart | Values |
|---|---|
| harbor/harbor | values-harbor.yaml |

Scripts

cs-deploy

```
Purpose:
  GoHarbor registry.

Usage:
  sudo csdeploy.sh [-l] [-m <execution_mode>] [-h] [-q]

Execution modes:
  -l  [list-status]     - List current status.
  -m  <execution_mode>  - Valid modes are:

      [pull-charts]     - Pull charts to './charts/' directory.
      [install]         - Create namespace, PV's and install charts.
      [update]          - Redeploy or upgrade charts.
      [uninstall]       - Uninstall charts, remove PV's and namespace.
      [remove]          - Remove PV's namespace and all its contents.

Options and arguments:
  -h  Help
  -q  Quiet (Nonstop) execution.

Examples:
  # Pull charts to './charts/' directory
    ./csdeploy.sh -m pull-charts

  # Create namespace, PV's and install charts
    ./csdeploy.sh -m install

  # Redeploy or upgrade charts
    ./csdeploy.sh -m update

  # Uninstall charts, remove PV's and namespace
    ./csdeploy.sh -m uninstall

  # Remove PV's namespace and all its contents
    ./csdeploy.sh -m remove

  # Display namespace, persistence and charts status:
    ./csdeploy.sh -l
```

License

Copyright © 2025 cSkyLab.com ™

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.